I have great news to share with you. Calc’s ODS import filter in 3.5 should be substantially faster when you have documents with a large number of named ranges. Read on if you want to know more details.

What happened?

Laurent Godard, Markus Mohrhard, and I have been working pretty hard over the past month to bring the performance of the ODS import filter to a reasonable level, especially with documents containing a large number of named ranges.

Here is the background. Laurent uses LibreOffice as a platform for his professional extension, which makes heavy use of named ranges. It programmatically generates ODS documents and inserts hundreds or thousands of named ranges as intermediary storage to further process the data. The problem, however, was that our import performance with that kind of document was so poor that this process was taking a prohibitively long time. In order for his extension to perform optimally, our ODS import filter needed to be optimized, and optimized heavily.

During the Paris conference, we put our heads together to come up with a strategy to make that happen. Laurent was more than willing to participate in this effort, and in the end, he did a substantial amount of the work: profiling, analyzing code, coming up with optimization strategies, and putting it all together. Markus and I provided mentorship, code pointers, and occasional coding to accelerate this effort.

Our hope was to make it all happen in time for our first 3.5 release. And I’m very happy to say that we made it.

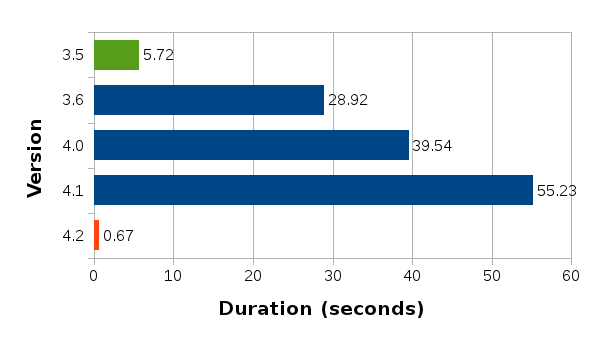

Benchmark

Since we are talking about performance, this post wouldn’t be complete without the actual numbers. So here goes.

Test document 1

Here is the first test document global500.ods. It contains 500 sheets, 12,500 global named ranges, and 12,500 formulas that reference them.

On my development machine, the last stable release, 3.4.4, takes 14 seconds to open this document. While 14 seconds may not seem that slow, keep in mind that this machine is unusually fast, built to withstand heavy developer use, so real-world performance is likely much less impressive (you can probably multiply that number by 3 to get a rough idea). Anyhow, using the latest master branch on the same machine, this document opens in roughly 2 and a half seconds. That’s roughly an 82% reduction in import time.

Test document 2

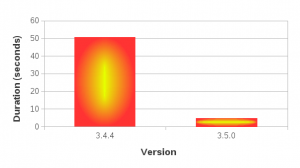

Here is the second, somewhat larger document global1000.ods. This document contains 1000 sheets, 25,000 named ranges and 25,000 formulas that reference them.

According to my benchmark, performed under the same conditions as for the first document, 3.4.4 opens this document in 50 seconds, whereas 3.5.0 opens it in under 5 seconds. That’s about a 90% reduction in import time. Pretty impressive!
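
For anyone who wants to check the arithmetic, here is a minimal sketch of how a reduction percentage can be derived from a pair of load times. The function name `percent_reduction` is made up for this illustration, and the inputs are the rounded timings quoted above, so the results can differ by a few points from percentages computed on exact measurements.

```python
def percent_reduction(before_s: float, after_s: float) -> float:
    """Return the reduction in import time as a percentage of the old time."""
    if before_s <= 0:
        raise ValueError("before_s must be positive")
    return 100.0 * (before_s - after_s) / before_s


# Rounded timings quoted in this post (seconds); the second "after" value
# is an upper bound, since that document opens in under 5 seconds.
timings = {
    "global500.ods": (14.0, 2.5),
    "global1000.ods": (50.0, 5.0),
}

for name, (before_s, after_s) in timings.items():
    print(f"{name}: {percent_reduction(before_s, after_s):.1f}% reduction")
```

Because the second document opens in under 5 seconds, the figure computed for it is a lower bound on the actual reduction.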

Real power of open source

This story shows another aspect of this remarkable achievement worth mentioning. If you use an open source product such as LibreOffice in your business, and if it doesn’t perform the way you need it to, you can actually join the project as a developer and coordinate the effort with the upstream developers to make it happen. And depending on the nature of the change you want to see happen, it can happen very quickly as this story demonstrates.

I wanted to emphasize this because, while more and more businesses and institutions are embracing open source software, many of them tend to focus too much on the cost-saving aspect of it, thereby developing the mistaken belief that cost saving is what open source is all about. It isn’t. The real power of using open source software in your deployment is that it gives you the ability to join and contribute to the project and to influence the direction of its development. That gives you real flexibility in planning and is, in my opinion, the best way to harness the power of open source software. The monetary savings come as a side effect and should be thought of only as an added bonus, not as the primary reason for deploying open source software.