It turns out that pretending to write an export filter, or at least adding a new entry to the Export dialog, is quite easy. In fact, you don’t even have to write a single line of code. Here is what to do.

Suppose you build OO.o yourself and have installed that build. Go back to your build tree and change into the following directory:

filter/source/config/fragments

and add the following two new files relative to this location:

./filters/calc_Kohei_SDF_Filter.xcu

./filters/calc_Kohei_SDF_Filter_ui.xcu

You can name your files any way you want, of course. ;-) Anyway, put the following XML fragments into these files:

<!-- calc_Kohei_SDF_Filter.xcu -->

<node oor:name="calc_Kohei_SDF_Filter" oor:op="replace">

<prop oor:name="Flags"><value>EXPORT ALIEN 3RDPARTYFILTER</value></prop>

<prop oor:name="UIComponent"/>

<prop oor:name="FilterService"><value>com.sun.star.comp.packages.KoheiSuperDuperFileExporter</value></prop>

<prop oor:name="UserData"/>

<prop oor:name="FileFormatVersion"/>

<prop oor:name="Type"><value>Kohei_SDF</value></prop>

<prop oor:name="TemplateName"/>

<prop oor:name="DocumentService"><value>com.sun.star.sheet.SpreadsheetDocument</value></prop>

</node>

<!-- calc_Kohei_SDF_Filter_ui.xcu -->

<node oor:name="calc_Kohei_SDF_Filter">

<prop oor:name="UIName"><value xml:lang="x-default">Kohei Super Duper File Format</value>

<value xml:lang="en-US">Kohei Super Duper File Format</value>

<value xml:lang="de">Kohei Super Duper File Format</value>

</prop>

</node>

Likewise, create another file:

./types/Kohei_SDF.xcu

with the following content:

<!-- Kohei_SDF.xcu -->

<node oor:name="Kohei_SDF" oor:op="replace" >

<prop oor:name="DetectService"/>

<prop oor:name="URLPattern"/>

<prop oor:name="Extensions"><value>koheisdf</value></prop>

<prop oor:name="MediaType"/>

<prop oor:name="Preferred"><value>false</value></prop>

<prop oor:name="PreferredFilter"><value>calc_Kohei_SDF_Filter</value></prop>

<prop oor:name="UIName"><value xml:lang="x-default">Kohei Super Duper File Format</value></prop>

<prop oor:name="ClipboardFormat"><value>doctype:Workbook</value></prop>

</node>
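The fragments above point at each other by name: the filter's Type property names the type node, and the type's PreferredFilter property names the filter node. Here is a rough sanity check for that, assuming those names are indeed expected to match. The helper names (prop_value, node_name, check_filter_refs) are made up for this sketch, and the sed patterns only handle the one-property-per-line layout used in this post.

```shell
# Print the <value> text of the first <prop> named $2 found in file $1.
prop_value() {
    sed -n 's|.*<prop oor:name="'"$2"'"><value[^>]*>\([^<]*\)</value>.*|\1|p' "$1" | head -n 1
}

# Print the oor:name of the first <node> element found in file $1.
node_name() {
    sed -n 's|.*<node oor:name="\([^"]*\)".*|\1|p' "$1" | head -n 1
}

# Check that a filter fragment ($1) and a type fragment ($2) refer to each other.
check_filter_refs() {
    if [ "$(prop_value "$1" Type)" != "$(node_name "$2")" ]; then
        echo "Type in $1 does not match the node name in $2" >&2
        return 1
    fi
    if [ "$(prop_value "$2" PreferredFilter)" != "$(node_name "$1")" ]; then
        echo "PreferredFilter in $2 does not match the node name in $1" >&2
        return 1
    fi
}
```

With the files from this post you would call it as check_filter_refs ./filters/calc_Kohei_SDF_Filter.xcu ./types/Kohei_SDF.xcu and look for a zero exit status.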

Once these new files are in place, register them in fcfg_calc.mk so that the build process can find them. Open fcfg_calc.mk and add Kohei_SDF to the end of T4_CALC, calc_Kohei_SDF_Filter to F4_CALC, and calc_Kohei_SDF_Filter_ui to F4_UI_CALC. Save the file and rebuild the module. This should regenerate the following configuration files (my build was done on Linux):
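Before kicking off the rebuild, it may be worth confirming that all three names actually made it into the makefile. The following is only a sketch: check_mk_entries is a hypothetical helper, and it merely checks that each name appears as a whole word somewhere in the file, not that it sits in the right variable.

```shell
# Verify that the three new entries appear as whole words in the makefile $1.
check_mk_entries() {
    for name in Kohei_SDF calc_Kohei_SDF_Filter calc_Kohei_SDF_Filter_ui; do
        # -w keeps Kohei_SDF from matching inside calc_Kohei_SDF_Filter
        if ! grep -qw -- "$name" "$1"; then
            echo "missing entry in $1: $name" >&2
            return 1
        fi
    done
}
```

Run it as check_mk_entries fcfg_calc.mk; it prints the first missing name and returns non-zero if something was forgotten.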

./unxlngi6.pro/misc/filters/modulepacks/fcfg_calc_types.xcu

./unxlngi6.pro/misc/filters/modulepacks/fcfg_calc_filters.xcu

./unxlngi6.pro/bin/fcfg_langpack_en-US.zip

One note: the language pack zip package should contain the file named Filter.xcu with the new UI string you just put in. If you don’t see that, remove the whole unxlngi6.pro directory and build the module again.
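One way to check this without unpacking anything by hand is to stream Filter.xcu out of the zip and grep for the string. This is a sketch that assumes Info-ZIP's unzip is installed; langpack_has_string is a made-up helper name, and the wildcard is used because the exact path of Filter.xcu inside the package is not spelled out here.

```shell
# Succeed if any Filter.xcu inside the zip $1 contains the literal string $2.
langpack_has_string() {
    # -p writes the matching archive members to stdout instead of to disk
    unzip -p "$1" '*Filter.xcu' 2>/dev/null | grep -qF -- "$2"
}
```

For the example in this post: langpack_has_string ./unxlngi6.pro/bin/fcfg_langpack_en-US.zip "Kohei Super Duper File Format" should return zero once the build has picked up the new UI file.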

Now it’s time to update your installation. You need to update the following files:

<install_dir>/share/registry/modules/org/openoffice/TypeDetection/Filter/fcfg_calc_filters.xcu

<install_dir>/share/registry/modules/org/openoffice/TypeDetection/Types/fcfg_calc_types.xcu

with the new ones you just rebuilt. Next, unpack the langpack zip file and extract Filter.xcu. Place this file in

<install_dir>/share/registry/res/en-US/org/openoffice/TypeDetection/Filter.xcu

to replace the old one.
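If you end up repeating this step a few times, the three copies can be wrapped in a small function. This is a sketch only: update_install is a hypothetical helper, its first argument is the build output directory (unxlngi6.pro in my case), the second is your install directory, and the third is the Filter.xcu you have already extracted from the langpack zip.

```shell
# Copy the rebuilt configuration files into an existing installation.
#   $1 = build output directory, $2 = install directory,
#   $3 = Filter.xcu extracted from the langpack zip
update_install() {
    td="$2/share/registry/modules/org/openoffice/TypeDetection"
    res="$2/share/registry/res/en-US/org/openoffice/TypeDetection"
    cp "$1/misc/filters/modulepacks/fcfg_calc_filters.xcu" "$td/Filter/fcfg_calc_filters.xcu" &&
    cp "$1/misc/filters/modulepacks/fcfg_calc_types.xcu" "$td/Types/fcfg_calc_types.xcu" &&
    cp "$3" "$res/Filter.xcu"
}
```

The function stops at the first copy that fails, so a typo in one of the paths does not leave you guessing which files were replaced.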

OK so far? There is one more thing you need to do to complete the process. These configuration files are cached, so the cached data must be removed before the updated files take effect. The cache lives in the user configuration directory; locate that directory and delete the cache like so:

rm -rf <user_config_dir>/user/registry/cache
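Since rm -rf is unforgiving, a slightly more defensive variant of the same command might look like the sketch below. clear_registry_cache is a made-up name; the function refuses to run without an argument and only ever touches the user/registry/cache subdirectory.

```shell
# Remove the cached registry data under the user configuration directory $1.
clear_registry_cache() {
    if [ -z "$1" ]; then
        echo "usage: clear_registry_cache <user_config_dir>" >&2
        return 2
    fi
    cache="$1/user/registry/cache"
    if [ ! -d "$cache" ]; then
        echo "no cache directory at $cache, nothing to do" >&2
        return 0
    fi
    rm -rf -- "$cache"
}
```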

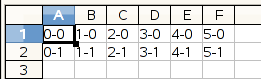

That’s it! Now, fire up Calc and launch the Export dialog. You should see the new file format entry you just put in. :-)

Just try not to export your file using this new filter for real, because that will utterly fail. ;-)